Facebook dealt with 29m abusive posts in Q1 2018

- Wednesday, May 16th, 2018

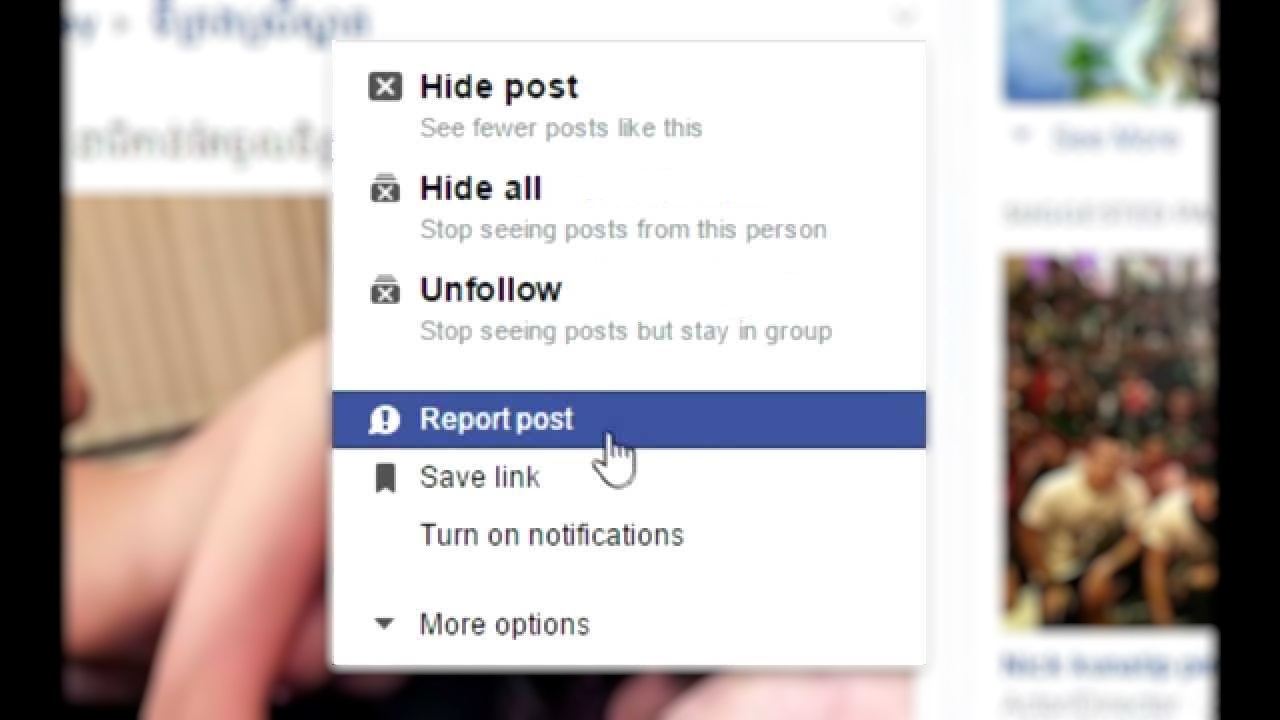

Facebook has revealed for the first time the extent of its efforts to deal with abusive posts on the social network, with 29m posts deleted or issued warnings due to breaking platform rules on hate speech, graphic violence, terrorism or sex in just the first three months of the year.

The social network is combining AI tools with human moderators in an effort to enforce its rules across the platform, but the figures revealed considerable differences in just how effective machine learning has been in fighting back against abuse.

While algorithms were able to flag 99.5 per cent of all posts made in support of Islamic State, Al-Qaeda and other affiliated groups, just 38 per cent of hate speech posts were identified by automatic tools in the same period, leaving 62 per cent that were only addressed following user reports.

The figures also showed that rule-breaking behaviour was increasing on the platform, at least compared to Q4 2017, with users more likely to have encountered graphic violence and adult nudity during Q1 than in the previous three months. According to Facebook, it is currently unable to offer similar stats for hate speech or terrorist propaganda.

“As Mark Zuckerberg said at F8, we have a lot of work still to do to prevent abuse,” said Guy Rosen, vice president of product management at Facebook. “It’s partly that technology like artificial intelligence, while promising, is still years away from being effective for most bad content because context is so important. In addition, in many areas – whether it’s spam, porn or fake accounts – we’re up against sophisticated adversaries who continually change tactics to circumvent our controls, which means we must continuously build and adapt our efforts. It’s why we’re investing heavily in more people and better technology to make Facebook safer for everyone.”

The report also found that 837m pieces of spam were removed in Q1 2018, nearly 100 per cent of which was found and flagged before having to be reported. According to Facebook, the key to this was fighting fake accounts, 583m of which were disabled in the same period, most of them within minutes of registration. Despite these efforts, and the millions of fake accounts which are prevented from ever registering, Facebook estimates that around three to four per cent of active Facebook accounts during this period were still fake.